For years, big tech companies had a reliable defense whenever lawmakers asked them to keep kids off adult platforms: it’s too hard, too risky, too inaccurate, too invasive.

That excuse is evaporating.

A fast-growing wave of child online-safety laws is now forcing social networks, AI chatbots, and adult-content sites to prove they can actually enforce age limits — because governments increasingly believe the technical barriers are no longer real barriers.

What changed isn’t only the politics. It’s also the tech.

The turning point: “age assurance” is no longer experimental

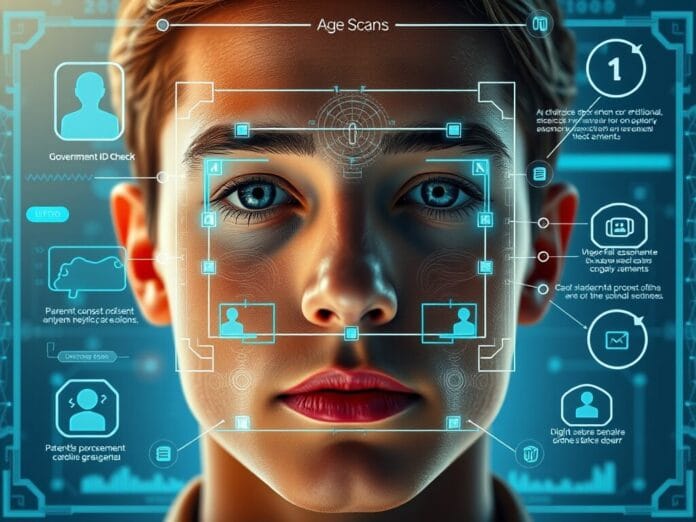

A whole industry has matured around age assurance — tools that estimate or verify someone’s age using a layered approach:

- facial age estimation (face scan)

- government ID checks (automated, machine-read)

- parental consent or approval flows

- “inference” from digital signals (account history, behavior patterns, linked info)

Instead of relying on a single “age gate” that kids can bypass with a fake birthday, platforms are stacking multiple signals — and switching methods depending on how close someone appears to be to the cutoff.

Why it’s suddenly feasible: AI made it cheaper and more accurate

Recent improvements in AI have done two things at once:

- Accuracy improved

Face-scanning age estimation has steadily reduced its average error over the past decade. In practical terms, it’s getting better at distinguishing “clearly underage,” “clearly adult,” and the messy middle.

- Cost dropped dramatically

Age checks that used to be expensive enough to reserve for high-stakes transactions can now be run cheaply at scale. For basic automated checks, the price per verification can be well under a dollar — and in high volumes, it can drop to mere cents.

That’s the quiet revolution: when age checks become cheap enough, platforms can’t claim they’re economically impossible anymore.

The “grey zone” problem: teens near the cutoff

Even the best systems still face a hard reality: humans don’t age in clean categories. The closer someone is to the cutoff (say, within a few years), the harder it gets.

That’s why emerging enforcement models are becoming more “bar-style”:

- If you look clearly adult → you’re let through

- If you look clearly young → you’re blocked or diverted

- If you’re near the cutoff → you’re asked for stronger proof (ID or parental consent)

It’s not perfect. But it’s increasingly defensible.
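The three-way rule above can be sketched in a few lines. This is only an illustration, not any vendor’s actual logic: the function name `triage`, the cutoff of 18, and the 3-year buffer are all assumptions chosen to mirror the “within a few years” grey zone described here.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"        # looks clearly adult
    BLOCK = "block"        # looks clearly young: block or divert
    ESCALATE = "escalate"  # grey zone: ask for ID or parental consent


def triage(estimated_age: float, cutoff: int = 18, buffer_years: float = 3.0) -> Decision:
    """Route a user based on an estimated age and a buffer around the cutoff.

    The cutoff and buffer values are illustrative assumptions, not taken
    from any real law or product.
    """
    if estimated_age >= cutoff + buffer_years:
        return Decision.ALLOW
    if estimated_age < cutoff - buffer_years:
        return Decision.BLOCK
    # Too close to the cutoff for an estimate alone to settle it.
    return Decision.ESCALATE


if __name__ == "__main__":
    for age in (30, 17, 12):
        print(age, triage(age).value)
```

A wider buffer pushes more people into the stronger-proof path, which catches more mistakes but adds friction for adults who simply look young. That is the trade-off regulators and platforms end up negotiating.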

What platforms are doing differently now

Social media companies are leaning on something porn or gambling sites don’t have: massive user context.

Instead of forcing constant face scans, they often use “inference” — signals like:

- when an account was created

- what content the account engages with

- patterns that suggest underage behavior

Then they apply higher-friction checks (like face scans or ID verification) only when the system flags uncertainty.

In other words: fewer hard checks for everyone, more checks for accounts that raise red flags.
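As a rough sketch of that flag-then-escalate pattern, the snippet below scores an account from the kinds of signals listed above and only calls for a face scan or ID check when the score crosses a threshold. Everything here is hypothetical: the field names, the weights, and the 0.5 threshold are made up for illustration, and real platforms would likely rely on trained models rather than hand-set weights.

```python
from dataclasses import dataclass


@dataclass
class AccountSignals:
    account_age_days: int       # how long ago the account was created
    minor_content_share: float  # share of engagement with youth-oriented content, 0 to 1
    behavior_flags: int         # count of patterns suggesting underage use


def underage_risk(s: AccountSignals) -> float:
    """Combine signals into a 0-to-1 risk score (weights are illustrative assumptions)."""
    score = 0.0
    if s.account_age_days < 365:
        score += 0.3  # newer accounts carry less history to reason from
    score += 0.5 * min(max(s.minor_content_share, 0.0), 1.0)
    score += 0.1 * min(max(s.behavior_flags, 0), 2)  # cap so flags alone cannot dominate
    return min(score, 1.0)


def needs_hard_check(s: AccountSignals, threshold: float = 0.5) -> bool:
    """Return True when the account should be sent to a face scan or ID check."""
    return underage_risk(s) >= threshold


if __name__ == "__main__":
    long_standing = AccountSignals(account_age_days=2000, minor_content_share=0.1, behavior_flags=0)
    flagged = AccountSignals(account_age_days=30, minor_content_share=0.8, behavior_flags=2)
    print(needs_hard_check(long_standing), needs_hard_check(flagged))
```

The point of the design is that most accounts never reach the expensive, intrusive step; only the ones the inference layer is unsure about do.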

The arms race: kids will try everything

The moment age gates become real, kids start testing the edges. The tricks are exactly what you’d expect — and some you wouldn’t:

- masks

- heavy makeup or fake facial hair

- trying to scan something that isn’t their face (even plastic “faces” from toys)

There’s also a key tension in how checks are run:

- Cloud-based checks can be more robust (better detection, more compute).

- On-device checks are more privacy-preserving (data stays on your phone), but can be less effective at catching deception.

So the industry is juggling two competing demands: privacy vs. enforcement strength.

Early results: bans create numbers fast — but interpretation is tricky

Where strict age rules have already kicked in, platforms say they’ve locked or removed millions of suspected underage accounts within weeks.

But early enforcement data can be misleading:

- some accounts weren’t active

- some were blocked from logging into related services

- companies may aim for the minimum compliance needed to avoid penalties

There’s also a deeper political wrinkle: platforms don’t necessarily want these laws to spread globally — so they’re not always motivated to make them look like a flawless success story.

The real story: the “impossible” argument is collapsing

The biggest shift is psychological and regulatory:

- Governments are more confident age checks can work.

- Vendors now have benchmarks, standards, and certification schemes that didn’t exist a few years ago.

- App stores are also rolling out ways for parents to share a child’s age range with developers, reducing friction.

The result: the debate has moved from “can it be done?” to “how strict will enforcement be — and how much privacy will society trade for it?”

Bottom line

Age-checking tech isn’t perfect — but it’s no longer a punchline. The industry has improved enough that lawmakers are treating age limits as enforceable, not aspirational.

The next fight won’t be over whether age assurance works.

It’ll be over:

- who gets scanned and how often

- what data is collected and where it’s stored

- whether enforcement unfairly impacts certain users

- and whether platforms comply aggressively or drag their feet until regulators force their hand