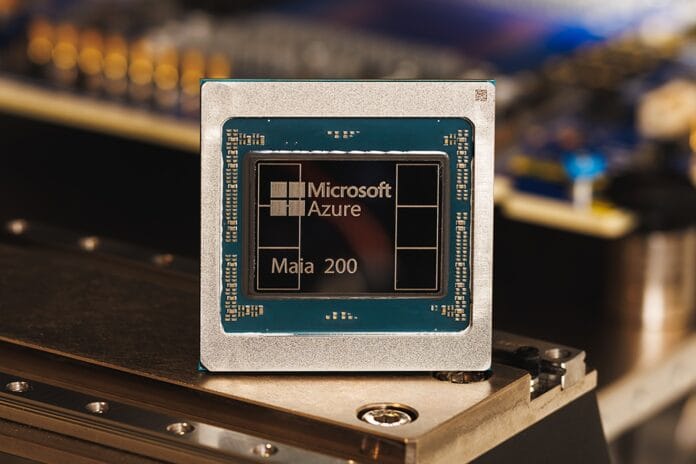

Microsoft just pushed its AI strategy into a sharper, more aggressive phase: it unveiled Maia 200, the second generation of its in-house AI chip, and paired it with a new software toolkit designed to weaken Nvidia’s biggest advantage — the developer ecosystem.

This isn’t just “another chip launch.” It’s Microsoft signaling that the AI future can’t run forever on rented infrastructure.

What Microsoft launched

The headline is Maia 200, Microsoft’s next-gen custom AI accelerator. It is being switched on this week inside a Microsoft data center in Iowa, with a second deployment planned for Arizona. In other words: this isn’t a lab prototype. It’s going into production.

The broader message is clear: the cloud giants are done being “just customers.” They want to become competitors.

The real target isn’t the GPU — it’s CUDA

Nvidia’s dominance isn’t only about hardware performance. It’s about CUDA — the software platform that developers build on, optimize for, and don’t want to abandon once their stack is working.

Microsoft is trying to breach that fortress by supporting Triton, an open-source language and compiler for writing GPU kernels, originally developed at OpenAI. It is meant to help developers run AI workloads across different chip architectures, not just Nvidia’s.

Translation: Microsoft wants the AI world to become more portable, so switching chips becomes a choice, not a nightmare.

What’s inside Maia 200 (and why it matters)

Maia 200 is built using TSMC’s 3-nanometer process, which puts it on a leading-edge manufacturing node. It also uses high-bandwidth memory (HBM), which is critical for AI workloads, though not the newest HBM generation arriving with Nvidia’s latest flagship chips.

But Microsoft isn’t trying to win a spec-sheet war with Nvidia on day one. It’s trying to win on efficiency at scale.

One standout design move: Maia 200 includes a lot of SRAM, on-chip memory that is much faster to access than off-chip memory such as HBM. That matters especially for inference, the stage when AI models serve answers to millions of users in real time. SRAM-heavy designs can reduce memory bottlenecks when systems are flooded with requests.

That’s a playbook borrowed from newer AI chip challengers that focus on speed per dollar in real-world usage, not just benchmark flexing.
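To make the SRAM argument concrete, here is a rough back-of-the-envelope sketch of a memory-bound inference step, where time is approximated as bytes moved divided by bandwidth. Every number in it is a hypothetical placeholder chosen for illustration, not a Maia 200 specification, and the function names are invented for this example. It also assumes on-chip and off-chip transfers happen one after the other, which real hardware can partly overlap.

```python
def transfer_time_ms(num_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Lower bound on the time to move num_bytes at a given bandwidth, in ms."""
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return num_bytes / bandwidth_bytes_per_s * 1e3


def step_time_ms(
    total_bytes: float,
    on_chip_fraction: float,
    on_chip_bw: float,
    off_chip_bw: float,
) -> float:
    """Estimate one memory-bound step when part of the data is served on-chip.

    Simplifying assumption: the two transfers are serialized (no overlap).
    """
    if not 0.0 <= on_chip_fraction <= 1.0:
        raise ValueError("on_chip_fraction must be between 0 and 1")
    on_chip_bytes = total_bytes * on_chip_fraction
    off_chip_bytes = total_bytes - on_chip_bytes
    return (
        transfer_time_ms(on_chip_bytes, on_chip_bw)
        + transfer_time_ms(off_chip_bytes, off_chip_bw)
    )


# Hypothetical figures for illustration only (not vendor specifications).
WORKING_SET_BYTES = 2e9   # assumed 2 GB read per step
OFF_CHIP_BW = 4e12        # assumed 4 TB/s off-chip (HBM-class) bandwidth
ON_CHIP_BW = 40e12        # assumed 40 TB/s on-chip (SRAM-class) bandwidth

if __name__ == "__main__":
    for fraction in (0.0, 0.5, 0.9):
        t = step_time_ms(WORKING_SET_BYTES, fraction, ON_CHIP_BW, OFF_CHIP_BW)
        print(f"on-chip fraction {fraction:.1f}: ~{t:.3f} ms per step")
```

With these assumed numbers, the estimate drops from about 0.5 ms per step with nothing served on-chip to roughly 0.1 ms when 90% of the data is. The exact figures are made up. The point is the shape of the curve: the more of the working set that stays in fast on-chip memory, the less each request waits on off-chip memory.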

Why Microsoft is doing this now

Because AI is becoming infrastructure, and infrastructure is too expensive to outsource forever.

Cloud giants like Microsoft, Google, and Amazon are producing their own chips for three reasons:

- Cost control: training and running AI is wildly expensive

- Supply security: the world can’t rely on one vendor pipeline

- Competitive leverage: owning the stack means better margins and tighter product integration

And a fourth reason, the most important one:

If you control the chip and the software layer, you control the platform.

What this means for Nvidia

This is not “Nvidia is finished.” Nvidia still has the deepest ecosystem, the strongest developer lock-in, and huge momentum.

But the direction is undeniable: Nvidia’s biggest customers are building exit ramps.

Even if Maia 200 only powers a portion of Microsoft’s internal workloads at first, it gives Microsoft something priceless: options. And in the AI arms race, options are power.

Bottom line

Maia 200 is Microsoft placing a bet on a future where AI compute becomes like electricity: too strategic to depend on one provider.

The chip is important.

But the software move is the real provocation.

Microsoft isn’t just trying to run AI cheaper — it’s trying to make the AI world less Nvidia-shaped.